Mark Jelasity

University of Szeged, Hungary, and Hungarian Academy of Sciences

Vilmos Bilicki

University of Szeged, Hungary

P2P botnets are a challenge to the security of the Internet that is rather difficult to overestimate. Considering that P2P botnets have not even begun to fully exploit the increasingly advanced P2P techniques available to them, the future seems even more challenging.

State-of-the-art approaches for detecting P2P botnets rely on considerable human effort: a specimen of the P2P bot needs to be captured and reverse engineered; message exchange patterns and signatures need to be extracted; the network needs to be crawled using tailor-made software, have its indexing mechanism poisoned, or be otherwise infiltrated; and countless creative techniques have to be applied, such as visualizations, identifying abnormal patterns in DNS or blacklist lookups, understanding propagation mechanisms, and so on (e.g., [23,5,3]). Besides, aggressive infiltration and crawling introduces collateral damage: it changes the measured object itself very significantly [12].

While creative and knowledge-intensive approaches are obviously useful, it would be important to be able to detect and map botnets automatically, and as generically as possible. Ideally, network monitoring and filtering tools installed at routers and other network components should take care of the job with very little human intervention based on the (often enormous volumes of) Internet traffic constantly flowing through them.

This automation problem has been addressed in the context of IRC-based botnets [26] and, recently, also in the context of detecting generic botnet activity [4] and, specifically, P2P traffic [8].

In this paper we examine how techniques presented in [8] perform in the case of botnets. Instead of looking at real traffic traces, we base our methodology on simulation: we define synthetic flows on top of an AS-level model of the Internet assuming various P2P botnets. This is necessary because our main goal is not to evaluate current botnets, but rather to explore some advanced P2P techniques such as localization and clustering that future P2P botnets are likely to adopt to avoid detection.

Our main contribution is demonstrating that P2P botnets can easily hide their traffic even at the largest backbone router if they apply a few P2P techniques that are available from the literature or that are fairly evident, while being able to maintain their overlay and hence their malicious activity as well.

The implication is that local and isolated efforts are not very promising; if we would like to protect the Internet from P2P botnets that are likely to increase in size and sophistication, and that are still very far from their full potential, we need to fight fire with fire and start to devote serious efforts to the consideration of P2P infrastructures and algorithms for automated detection.

If we want to identify and filter P2P botnet traffic in an automated way, we can focus on roughly three kinds of activity: propagation, attacks by the botnet, and overlay traffic, which involves repairing failed overlay links, adding new nodes to the overlay, and spreading and searching commands of the botmaster.

We argue that the most promising approach is to focus on overlay traffic. It has a small volume, but it is arguably the most regular and reliable traffic any P2P botnet generates, since the overlay network has to be constantly repaired, and bots need regular information about commands of the botmaster, updates, and so on.

Although the propagation of bots can be detected if it involves port scanning and similar suspicious network activity (e.g., [1]), bots can also spread under the radar via email, websites, file sharing networks, and ad hoc wireless networks, or even in the old-fashioned way, by infecting files on pen drives and other portable media, which generates no network traffic at all [15,27].

Certain "visible" attacks, such as DDoS, spamming, or brute force login, could also be detected automatically. In an optimistic scenario, we can immediately identify and block those bots that participate in these attacks. But this is still far from enough: P2P overlays can apply very cheap and simple methods that enable them to tolerate extremely well the loss of large portions (even 80%) of the overlay [10]. In addition, certain types of malicious activity, such as collecting personal data (bank account information, passwords, etc.), can blend into (or even piggyback on) overlay traffic perfectly.

One interesting idea is that--instead of concentrating just on the overlay or only on attacks--we should look for a correlation between groups of nodes that produce a similar flow-level behavior due to overlay traffic and groups that perform attacks, as proposed in [4]. However, as acknowledged in [4], such correlations could be reduced to a minimum by a sophisticated botnet.

The basic motivation of our work is that we think that the structure of P2P networks is a very promising, although difficult, target to try to detect. The structure is completely insensitive to actual flow characteristics: Nodes can mimic other protocols or they can behave randomly, but the traffic dispersion graph they generate must still reveal the overlay network they are organized in. Evidently, this structure will have to be correlated to malicious behavior as well.

Accordingly, in the rest of the paper we focus on overlay traffic.

Approaches to automated traffic classification and filtering typically start from observing packets or flows and classify them through the application of a stack of methods ranging from simple port-based filtering to sophisticated supervised or unsupervised machine learning and classification methods over packet and flow data. A summary of such methods is given in [22].

However, P2P botnet overlay traffic does not necessarily look malicious or harmful (in fact, in itself it is neither), even if isolated and classified properly. It is essential to be able to identify this traffic as part of a network: it is membership in such a network that makes the traffic suspicious and could trigger a warning [2].

It has already been argued that it is very difficult to detect P2P traffic using packet or flow classification methods alone [8,7]. Most of the key characteristics of P2P traffic lie in the network defined by the flows, the so-called traffic dispersion graph (TDG). By building and analyzing TDGs of locally observable flow data after the classification phase, it is possible to extract important additional clues about the organization of an application and, for example, label it as P2P.

In [7], the TDG is defined on top of a set of flows $S$ that have the usual format <srcIP, srcPort, dstIP, dstPort, protocol>. The TDG is the directed graph $G(V,E)$ where $V$, the set of vertices, contains the set of IPs in $S$, and $E$, the set of edges, contains the edges $(a,b)$ such that there is a flow in $S$ with srcIP $= a$ and dstIP $= b$.
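As an illustration, the TDG definition above can be sketched in a few lines of Python. This is only a minimal sketch: the `Flow` record type and the `build_tdg` helper are hypothetical names introduced here, and the addresses are made-up examples.

```python
from collections import namedtuple

# Flow record in the usual 5-tuple format (field names are assumptions).
Flow = namedtuple("Flow", "src_ip src_port dst_ip dst_port protocol")

def build_tdg(flows):
    """Return (vertices, edges) of the TDG induced by a collection of flows."""
    vertices = set()
    edges = set()
    for f in flows:
        # Every IP that appears in a flow is a vertex.
        vertices.update((f.src_ip, f.dst_ip))
        # One directed edge srcIP -> dstIP, regardless of ports and protocol.
        edges.add((f.src_ip, f.dst_ip))
    return vertices, edges

flows = [
    Flow("10.0.0.1", 4000, "10.0.0.2", 80, "tcp"),
    Flow("10.0.0.1", 4001, "10.0.0.2", 80, "tcp"),  # same IP pair, same edge
    Flow("10.0.0.2", 5000, "10.0.0.3", 53, "udp"),
]
vertices, edges = build_tdg(flows)
```

Note that several flows between the same pair of IPs collapse into a single edge, so the TDG records who talks to whom and not how much.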

Since the present work intends to challenge the feasibility of local methods for detecting botnets, in order to be convincing we need to be generous to the local methods that are available for traffic classification. Therefore we assume that traffic that belongs to a given P2P botnet can be isolated in an unlabeled way. That is, we assume that there are methods available to group a set of flows together that belong to the P2P botnet, but that we cannot determine whether the identified class of traffic is in fact botnet traffic.

Note that this is already an extremely strong assumption. We will argue that even with this assumption, it is very difficult to identify the traffic as P2P traffic generated by a large P2P network if a P2P botnet applies certain P2P techniques. To show this, we examine how the P2P traffic identification approach presented in [8] performs in this context.

There is a huge number of design options for P2P overlays [16], so selecting a suitable model that allows us to draw conclusions on the detectability of P2P botnet overlay traffic in general is highly non-trivial. First we give a very brief bird's-eye-view of existing overlay designs, and based on this overview we propose a simple model. Finally, we present techniques that could help a P2P botnet to hide; we will examine these techniques in our experiments in Section 5.

Structured overlays are distributed linked data structures designed for efficient routing (ID search). Nodes have unique IDs that can be used to address them. The overlay is organized to make efficient routing to a given ID possible. All versions of these overlays can be described as having a local structure based on some metric (often a ring, along which the IDs are ordered) and long range links that serve as shortcuts. Shortcuts are typically arranged in a way that is consistent with the optimal arrangement described in [14]. Current P2P botnets are based on structured overlays such as Kademlia [3].

Unstructured networks are extremely robust, and, due to their lack of structure, they can be harder to discover as well. However, command and control operations are more expensive; and, most importantly, communication cannot be localized (an important technique we describe below) since we have no structure to map on the underlay. Although we do not rule out unstructured networks as a potential architecture for botnets, here we focus on structured networks.

As a model we use an ordered ring with exponential long range links, a simplified version of the Chord topology [25]: We have $N$ nodes with IDs $0, 1, \ldots, N-1$. Node $i$ is connected to nodes $i-1 \pmod N$ and $i+1 \pmod N$ to form the ring. In addition, node $i$ is connected to nodes $i+2^j \pmod N$ for $j = 1, 2, \ldots, \log_2 N - 1$, which are the long range links.
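The model can be made concrete with a short sketch. We assume here that IDs run from 0 to N-1, that N is a power of two, and that the long range links of node i point to i + 2^j (mod N) for j = 1, ..., log2(N) - 1, which is our reading of the simplified Chord topology; the function name `ring_with_long_links` is hypothetical.

```python
import math

def ring_with_long_links(n):
    """Directed links of the ring-plus-exponential-links model (n a power of two)."""
    k = int(math.log2(n))
    links = set()
    for i in range(n):
        # Ring links to predecessor and successor.
        links.add((i, (i - 1) % n))
        links.add((i, (i + 1) % n))
        # Exponential long range links, j = 1 .. log2(n) - 1 (assumed range).
        for j in range(1, k):
            links.add((i, (i + 2 ** j) % n))
    return links

links = ring_with_long_links(16)
targets_of_0 = sorted(b for a, b in links if a == 0)
```

For 16 nodes, node 0 links to its two ring neighbors (1 and 15) and to the nodes at distance 2, 4, and 8.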

It is important to make a distinction between the overlay and the flows that exist in the overlay. Two nodes $a$ and $b$ are connected in the overlay if $a$ "knows about" $b$. This, however, does not imply that $a$ will ever actually send a message to $b$. For example, $a$ might remember $b$ simply in order to increase robustness in case of a failure. On the other hand, a node $a$ might send a message to $b$ even though $b$ is not the neighbor of $a$ in the overlay (that is, for the overlay to function properly, $a$ does not need to remember $b$ after sending it a message). For example, in Kademlia if $a$ wants to find the node of ID $x$ then $a$ will actually contact all nodes on the route to $x$. This is why Storm bots generate so many messages locally as part of the overlay traffic [5].

In short, we want to model the flows and not the overlay per se, so our model refers to the flows we can potentially observe. In the actual overlay there would probably also be links to the 2nd, 3rd, etc., neighbors in the ring, which are learned from direct neighbors.

In the following we describe two fairly straightforward techniques that future botnets could use to hide their traffic. The key point is that, using these techniques, the functionality of the overlay can be preserved while using far fewer links and traversing fewer routers.

In the ring every node has two neighbors that it actually communicates with at any given time, but it has $\log_2 N - 1$ long range links, all of which are frequently used for communication to achieve as few as $O(\log N)$ hops in overlay routing (where $N$ is the network size). Evidently, the ring would be sufficient for communication, but then sending a message from a node to another random node would require $O(N)$ hops in expectation. There is a middle ground: we can reduce the number of long range links to a constant number, and still have relatively efficient routing: $O(\log^2 N)$ hops [19] or even $O(\log N)$ hops [18].

However, let us remember that we are interested in the flows and not the overlay. In fact, we can modify our model to have a single long range flow per node, and still have $O(\log N)$ hops for routing messages in expectation. The trick is to create clusters of $\log N$ consecutive nodes in the ring, and allow each node to actually use only one of its long range links. Routing proceeds as usual, but when a node decides to send a message over a long range link, it first has to locate the node in its cluster that is allowed to use that link and send the message to that node along the ring. Note that nodes that are in the same cluster can rely on an identical set of long range links, since clusters can be interpreted as replicas of a node in an overlay of size $N/\log N$.

Finally, we state without proof that a much simpler stochastic approach, in which we have no clustering at all but where each node can use only one random long range link, results in a similar routing complexity in expectation. Here a node has to look at its $O(\log N)$ neighbors in the ring and pick the best long range link that is allowed in some of these neighbors.

In sum, from the point of view of flows all nodes now have two ring flows (one in and one out) and two long range flows on average (one out and one in on average).
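The flow count above can be checked on a small synthetic instance. The sketch below is only an illustration under stated assumptions: each node keeps one ring flow to its successor, and its single long range flow is chosen by its position on the ring (a hypothetical assignment rule, `i % len(exponents)`, standing in for the cluster bookkeeping). The function name `clustered_flows` is also hypothetical.

```python
import math
from collections import Counter

def clustered_flows(n):
    """One ring flow and one long range flow per node (n a power of two)."""
    k = int(math.log2(n))
    exponents = list(range(1, k))  # long range exponents available to a cluster
    flows = set()
    for i in range(n):
        flows.add((i, (i + 1) % n))  # ring flow to the successor
        # Assumed rule: the position of the node selects the one link it may use.
        j = exponents[i % len(exponents)]
        flows.add((i, (i + 2 ** j) % n))
    return flows

n = 64
flows = clustered_flows(n)
out_degree = Counter(a for a, _ in flows)
degree = Counter()
for a, b in flows:
    degree[a] += 1  # outgoing flow
    degree[b] += 1  # incoming flow
avg_flows_per_node = sum(degree.values()) / n
```

Every node originates exactly two flows, and since every flow also terminates at some node, the average number of flows a node participates in is four.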

We can also optimize the ring by trying to assign IDs to nodes in such a way that the resulting ring has links which touch the smallest possible number of routers. Several algorithms are known for achieving such optimized topologies that could be adapted to this application, for example [20,9].

To examine the partial TDGs as seen locally from several points of the Internet, we (i) created a static AS-level model of the Internet topology and routing, (ii) mapped the overlay network to this AS-level topology, and (iii) analyzed the local TDGs that are defined for each AS by the set of traversing flows in our model. We will now elaborate on these steps.

As our AS-level underlay we used an AS link dataset from CAIDA [6] that we cleaned by deleting uncertain links (around 3% of all links). We are aware of the methodological problems with collecting AS-level links and simulating protocols over them. However, for the purposes of this study, the main goal was not to achieve perfect low level realism but to capture the important structural properties of the Internet as a complex network, a level that even a good topology generator could provide.

We calculated the shortest paths for each pair of nodes in the topology after assuming that edges have equal weights. As a simple model of BGP routing we assumed that flows actually follow these shortest paths. Shortest paths also define the betweenness centrality of each node, that is, the number of shortest paths that touch a given node. This is a very important metric from the point of view of TDGs, since an AS with a high betweenness value is likely to be able to capture a more complete view of the TDG of the application.
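A minimal sketch of this routing model on a toy topology follows. It assumes unit edge weights, routes each ordered pair along one breadth-first shortest path, and counts endpoints as "touched" nodes; the helper names and the five-node graph are hypothetical and not taken from the CAIDA dataset.

```python
from collections import deque

def bfs_parents(graph, source):
    """Parent pointers of one shortest-path tree rooted at source."""
    parent = {source: None}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in sorted(graph[u]):  # sorted only to make the choice deterministic
            if v not in parent:
                parent[v] = u
                queue.append(v)
    return parent

def path_counts(graph):
    """Number of routed shortest paths touching each node (endpoints included)."""
    counts = {v: 0 for v in graph}
    for s in graph:
        parent = bfs_parents(graph, s)
        for t in parent:
            if t == s:
                continue
            node = t
            while node is not None:  # walk the path from t back to s
                counts[node] += 1
                node = parent[node]
    return counts

# Toy topology: hub A with leaves B and C, and a tail A - D - E.
graph = {
    "A": {"B", "C", "D"},
    "B": {"A"},
    "C": {"A"},
    "D": {"A", "E"},
    "E": {"D"},
}
counts = path_counts(graph)
```

In this toy graph the hub A lies on 18 of the 20 routed paths, which is why a node in such a position can observe a much larger part of any TDG than a leaf.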

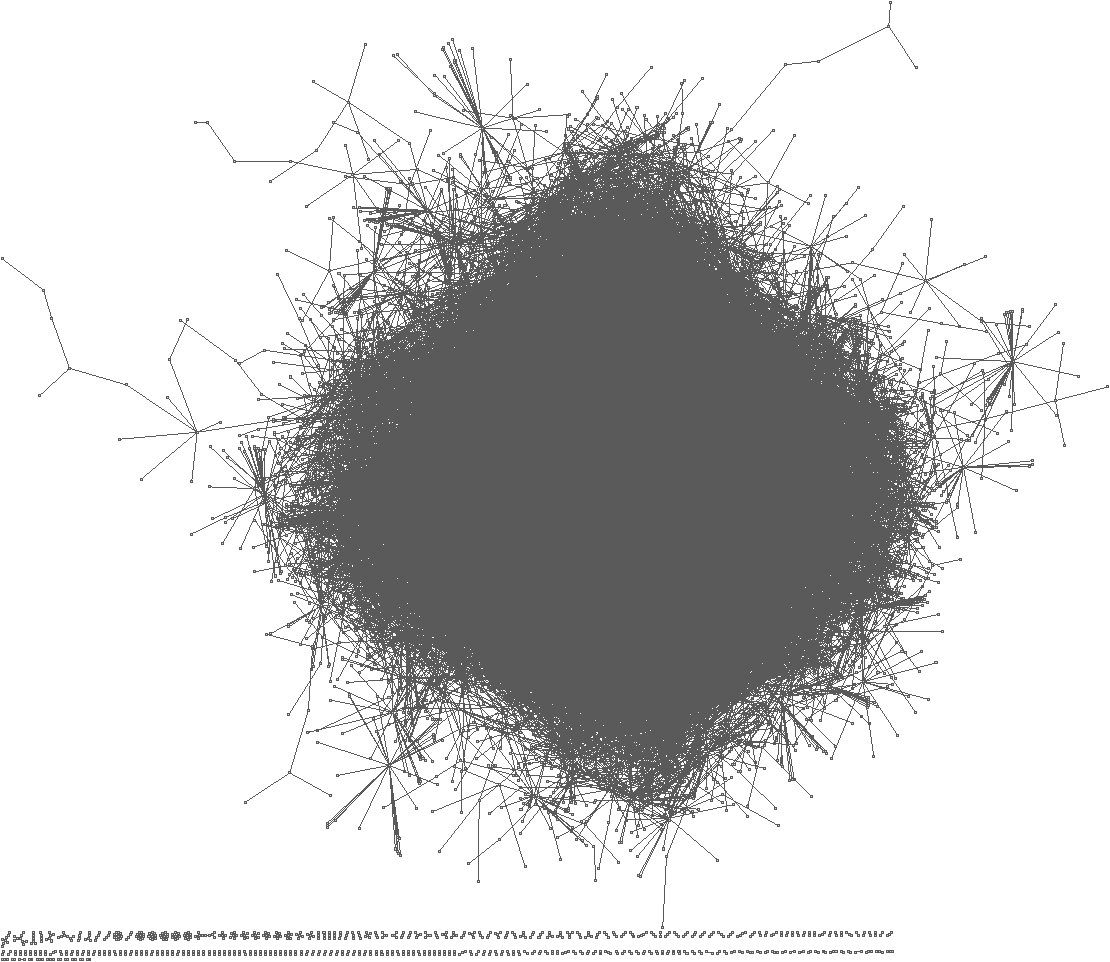

The statistical properties of the AS graph have been studied intensively (see for example [17]). Figure 1 illustrates the distribution of betweenness centrality in the dataset we used; we present it because we found the sharp switch to an exponential relationship at rank 1700 interesting.

![Figure 1: distribution of betweenness centrality in the AS-level dataset](img23.png)

Based on notions described in Section 4, we experiment with two kinds of mappings: random and localized. First we describe the common settings for these two mappings, and then we discuss the specifics of both.

The AS topology contains 14,630 nodes. With each type of overlay we map the overlay nodes onto the AS nodes in such a way that the number of overlay nodes in each AS is proportional to the size of the AS, but each AS has at least one overlay node. That is, in our model we do not take into account the geographical, social, or cultural bias that is known to affect botnet distribution [5]. The size of an AS is approximated based on the IP-prefix-to-AS mapping available from CAIDA (https://www.caida.org/data/routing/routeviews-prefix2as.xml).
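One simple way to realize such a size-proportional allocation is sketched below. The rounding rule, the function name `nodes_per_as`, and the AS sizes are assumptions made for illustration; the text does not specify how fractions are handled.

```python
def nodes_per_as(as_sizes, total_nodes):
    """Allocate overlay nodes roughly proportionally to AS size, at least one each."""
    total_size = sum(as_sizes.values())
    return {
        name: max(1, round(total_nodes * size / total_size))
        for name, size in as_sizes.items()
    }

# Hypothetical AS sizes (e.g., number of addresses covered by announced prefixes).
as_sizes = {"AS1": 1000, "AS2": 250, "AS3": 1}
allocation = nodes_per_as(as_sizes, 100)
```

Because of the rounding and the minimum of one node per AS, the allocated total can differ slightly from the requested number of overlay nodes (101 instead of 100 in this example).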

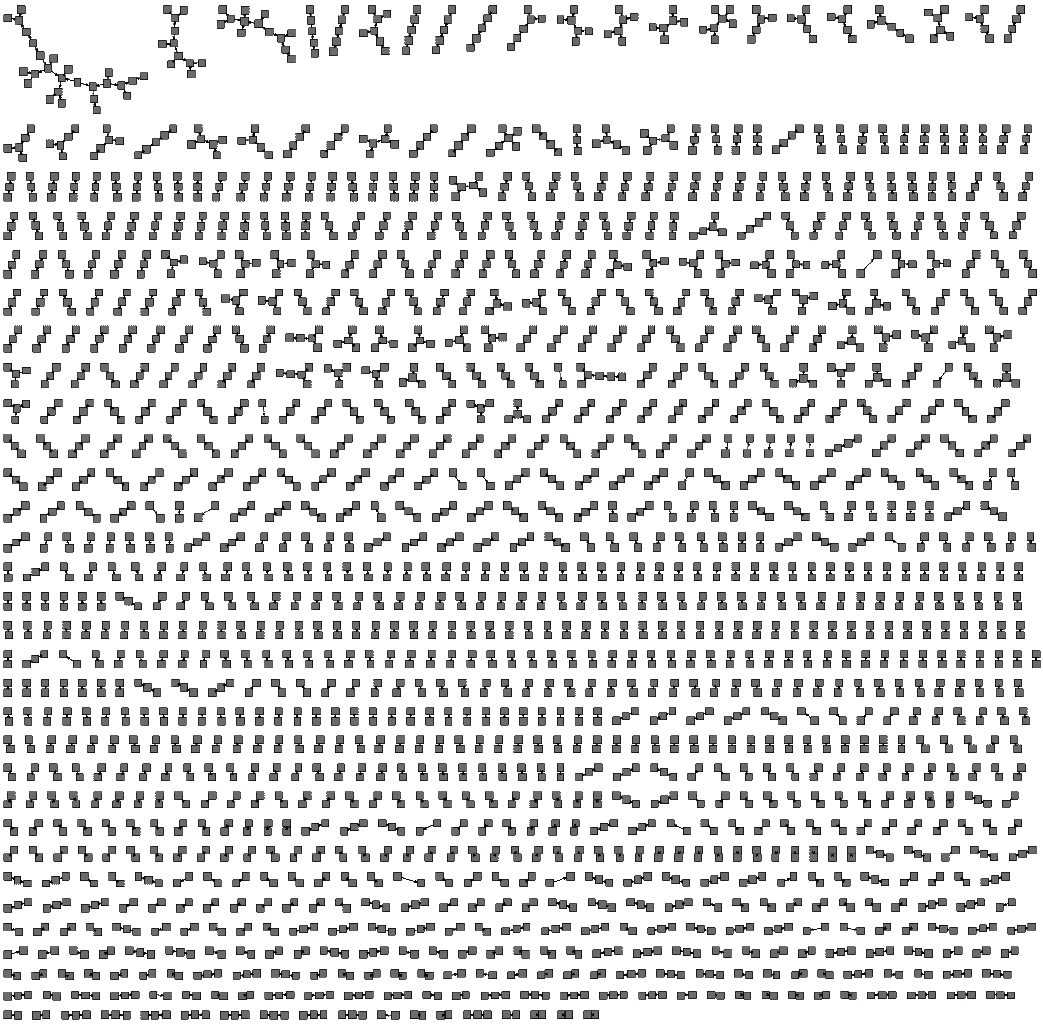

It is interesting to note that the size and the betweenness of an AS seem to show no correlation, as illustrated by the scatter plot in Figure 2.

![Figure 2: scatter plot of AS size versus betweenness centrality](img24.png)

In the random mapping we assign overlay nodes to ASes at random, keeping only size-proportionality in mind, as outlined above.

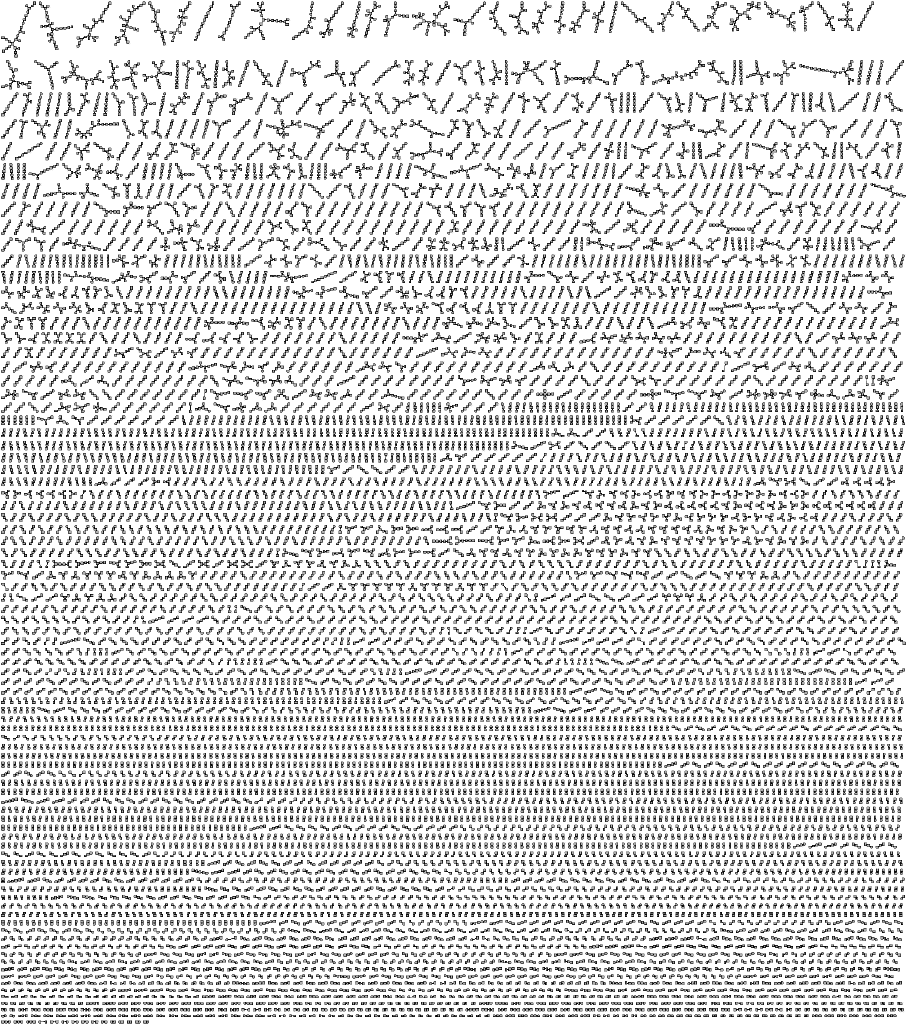

To create a localized mapping as described in Section 4.2.2, we first define a traveling salesperson problem (TSP) over the AS topology and, using a simple heuristic algorithm, we produce a "good enough" tour over the ASes. Finally, we assign the overlay nodes to ASes in such a way that the overlay ring is consistent with this tour, as illustrated in Figure 3.

![Figure 3: localized mapping of the overlay ring onto the AS tour](img25.png)

We define the TSP as follows: find a permutation of the ASes that minimizes the total number of AS-level hops of the closed tour that visits every AS exactly once in the order given by the permutation and then returns to the origin, where each transition from one AS to the next follows the shortest path in the AS-level topology.

The heuristic we applied is nearest neighbor tour construction [11]. We start the tour with a random AS, and iteratively extend the tour by adding the not yet visited AS that has the smallest shortest path length from the AS added last. Ties are broken at random.
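The heuristic can be sketched as follows on a toy topology. This is a simplified illustration: distances are recomputed by breadth-first search at every step, the nearest AS is measured from the last AS added to the tour (our reading of the construction), and ties are broken by name instead of at random so that the result is reproducible. All names and the graph are hypothetical.

```python
from collections import deque

def hop_distances(graph, source):
    """Shortest path length in hops from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in graph[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist

def nearest_neighbor_tour(graph, start):
    """Greedy tour: always move to the closest AS that is not yet visited."""
    tour = [start]
    unvisited = set(graph) - {start}
    while unvisited:
        dist = hop_distances(graph, tour[-1])
        # Deterministic tie-breaking by name (the text breaks ties at random).
        nxt = min(unvisited, key=lambda v: (dist[v], v))
        tour.append(nxt)
        unvisited.remove(nxt)
    return tour

def tour_length(graph, tour):
    """Total hops of the closed tour, following shortest paths between stops."""
    closed = zip(tour, tour[1:] + tour[:1])
    return sum(hop_distances(graph, a)[b] for a, b in closed)

# Toy AS topology: the path C - A - B - D - E.
graph = {
    "A": {"B", "C"},
    "B": {"A", "D"},
    "C": {"A"},
    "D": {"B", "E"},
    "E": {"D"},
}
tour = nearest_neighbor_tour(graph, "A")
length = tour_length(graph, tour)
```

Starting from A, the greedy tour walks to the far end of the path first and must then travel four hops back to reach C, which shows how a nearest neighbor tour can be noticeably longer than necessary.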

Before moving on to the analysis of TDGs, some comments are in order. First, our simulation is completely indifferent to the way a solution for the TSP is generated (i.e., a P2P algorithm or some other arbitrary heuristic method). What we would like to focus on is what happens when the mapping is sufficiently well localized.

Second, the heuristic mapping we produce is most likely quite far from the optimal localization. The actual optimal mapping is prohibitively expensive to calculate since the TSP is NP-hard in general, and we have a very large instance. Moreover, the definition of the localization problem itself could be refined as well, taking the requirements of the P2P botnet into account more directly in the objective function, and, for example, minimizing the sum or the maximal number of flows that can be seen at the ASes.

For these reasons our results should be interpreted as an upper bound on the amount of information that is available at local nodes.

We experimented with four overlay models that are given by the two kinds of mappings described in Section 5.2.2 (random and localized) with or without the clustering technique described in Section 4.2.1.

For these models we simply collected the flows that traverse a given AS, created the TDG, and collected statistics. The statistics we collected were the following: number of nodes, number of edges, number of weakly connected components, size of largest weakly connected component, average node degree (where we count both incoming and outgoing connections) and finally, a metric called InO, introduced in [8]. InO is the proportion of nodes that have both incoming and outgoing connections.
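The statistics listed above can be computed directly from the edge set of a TDG, as in the following sketch. It assumes that the TDG is given as a set of directed (source, destination) pairs without self-loops; the function name `tdg_stats` and the dictionary keys are hypothetical.

```python
from collections import defaultdict

def tdg_stats(edges):
    """Basic statistics of a TDG given as a set of directed (src, dst) pairs."""
    out_nb, in_nb, undirected = defaultdict(set), defaultdict(set), defaultdict(set)
    for a, b in edges:
        out_nb[a].add(b)
        in_nb[b].add(a)
        undirected[a].add(b)
        undirected[b].add(a)
    nodes = set(undirected)

    # Weakly connected components: traverse the graph ignoring edge direction.
    seen, sizes = set(), []
    for s in nodes:
        if s in seen:
            continue
        seen.add(s)
        stack, size = [s], 0
        while stack:
            u = stack.pop()
            size += 1
            for v in undirected[u]:
                if v not in seen:
                    seen.add(v)
                    stack.append(v)
        sizes.append(size)

    # InO: fraction of nodes with both incoming and outgoing connections.
    in_and_out = sum(1 for v in nodes if out_nb[v] and in_nb[v])
    return {
        "nodes": len(nodes),
        "edges": len(edges),
        "components": len(sizes),
        "largest_component": max(sizes),
        "avg_degree": 2 * len(edges) / len(nodes),  # in-degree plus out-degree
        "InO": in_and_out / len(nodes),
    }

stats = tdg_stats({(1, 2), (2, 3), (4, 5)})
```

In this toy TDG only node 2 has both an incoming and an outgoing edge, so InO is 0.2, and the average degree of 1.2 reflects a graph made of short chains.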

The results are shown in Figure 4.

The first observation we can make is that the more efficient factor for hiding the overlay traffic is clustering. Recall that the main effect of clustering is to reduce the flows each node participates in from $2\log_2 N$ to 4 on average. The effect of localization is significant as well, but it is less dramatic overall. There is one exception: the largest connected component, where localization results in a value that is two orders of magnitude smaller for the two most central ASes.

Let us first compare these results to those found in [8] for existing P2P networks in real traces. There, it was concluded that P2P traffic can be characterized by a high InO value (larger than 1%) and a high average degree (larger than 2.8). From this point of view, the TDGs we observe would not be classified as P2P traffic, because the average degree is extremely low: in fact, it is less than 2 in the case of the localized and clustered network, even for the most central ASes. It is interesting that even for the random mapping without clustering the threshold is crossed only at the most central ASes, although there it is crossed by a large margin.

On the other hand, the InO values are high. This is simply because we did not pay any attention to deceiving this metric explicitly. The reason is that in practice determining the direction of a flow is not very reliable, is prone to errors and quite possible to manipulate. We predict that the InO value could also be manipulated by a botnet using techniques that cannot be captured by the relatively high level model we apply that ignores flow details and dynamics.

In addition, in [8] some applications with high InO and low average degree have been found: one example is FTP, where the server initiates connections to the client as well, which further complicates detection and offers the botnet other opportunities for camouflage.

Of course it is possible that other metrics could help characterize these TDGs as belonging to a P2P network. Let us look at the TDGs using other metrics in order to have a more precise idea of what information is visible locally. Out of the 200,000 edges in the overlay, even the most central AS can see only 16,814 edges. The number of nodes in the TDG is 24,985, which is much larger than the number of edges: indeed, the connected components are mostly of size 2 (pairs) and 3. There are 8,172 connected components, the largest of which contains only 29 nodes. A visualization of the TDG that belongs to the most central AS is shown in Figure 5. The information available at the less central ASes is significantly less, as shown in Figures 4 and 5. Finally, the maximal node degree we observed in any TDG we have generated is no more than 4.

[Figure 5: TDG visualizations. Panels: AS3491 (betweenness 4,460,142), random; AS3491 (betweenness 4,460,142), localized, clustered; AS174 (betweenness 48,904,554), localized, clustered.]

It is important to emphasize that results presented here are based on the assumption that within one AS transit traffic traces can be aggregated and treated in a unified way. Although not impossible, this is a rather strong assumption, especially for the most interesting ASes with high betweenness centrality, that handle enormous volumes of transit traffic. In practice, the information visible locally could be even more fragmented.

Overall, then, we may conclude that when localization and clustering are applied, the overlay network traffic is almost completely hidden. A non-trivial proportion of the traffic can be seen only at the most central ASes, but even there, what is visible is predominantly unstructured.

Instead of looking at existing P2P botnets, we created synthetic flow data to model the set of flows that are available at an AS locally for observation. While it is clear that this methodology involves a simplified model of communication, it does allow us to get ahead of botnets via experimenting with algorithms that have not yet been deployed.

In spite of the low resolution that this methodology offers, we were able to predict and analyze a real problem: P2P overlays that are capable of efficiently and robustly organizing and controlling a large set of bots with a minimal communication footprint so as to avoid automated detection.

We hope that our results will provide non-trivial clues concerning directions for future research in automated botnet detection. Our results also show that we need to fight fire with fire and develop and apply P2P technology over large sets of cooperating administrative domains.

M. Jelasity was supported by the Bolyai Scholarship of the Hungarian Academy of Sciences.

This document was generated using the LaTeX2HTML translator Version 2002-2-1 (1.70)


The translation was initiated by Mark Jelasity on 2009-03-23